Photo by Aaron Burden on Unsplash

A client asked me a question last month that I could not answer well.

"How do we know AI didn't copy from someone else — or just make the dosage up? Legal needs answers."

I build their content system. Dozens of health and wellness articles a month, written for professionals. AI drafts the first pass. Editors revise. The client publishes.

Their legal team had started asking the right question: what exactly is checking that AI didn't hallucinate a dosage, or copy three sentences from another site?

The honest answer was: nothing systematic. Editors caught what editors caught. Sometimes that was enough. Sometimes it wasn't.

So I built the systematic step.

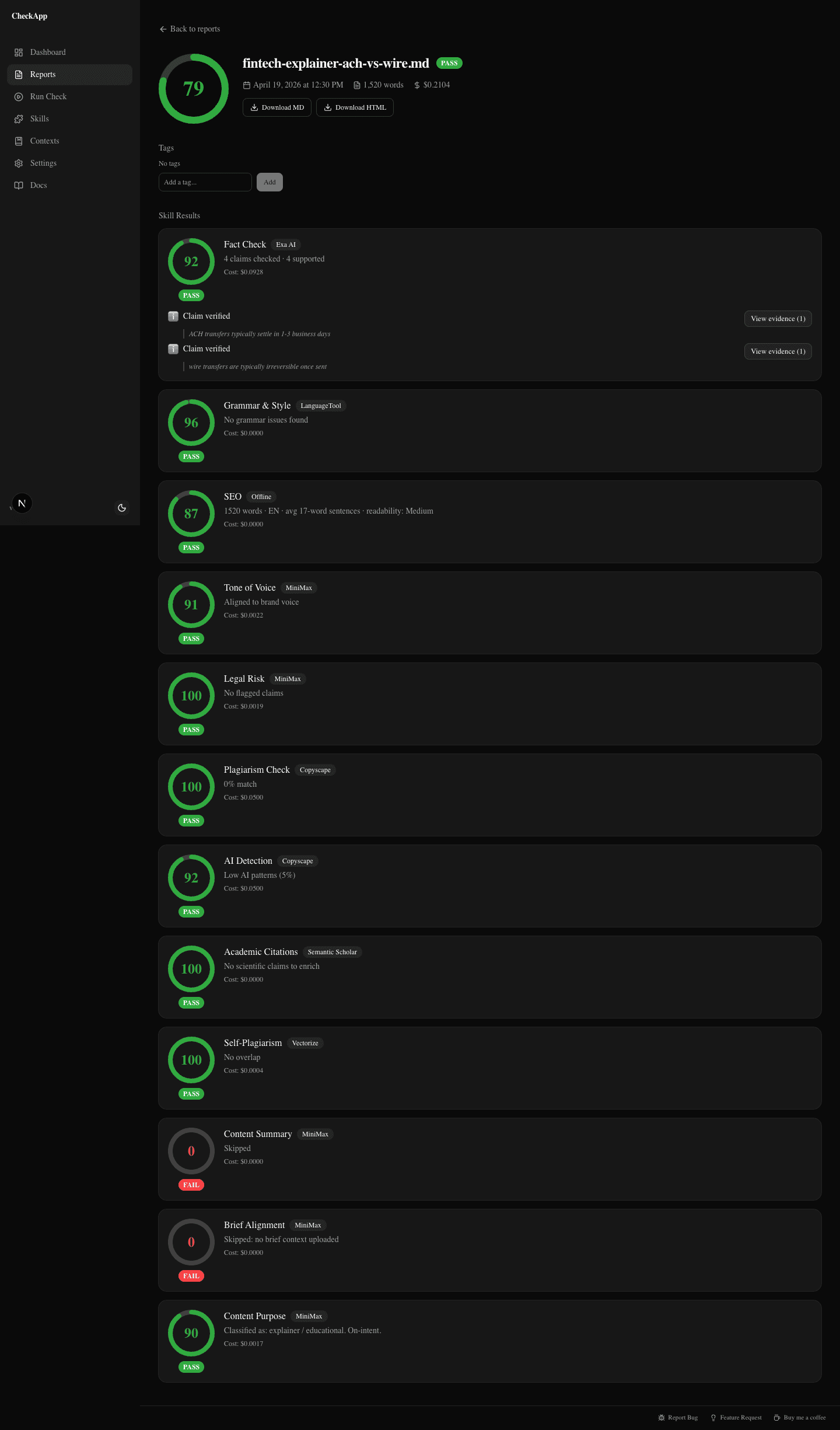

CheckApp is what came out of that conversation: an open-source quality gate that runs 12 checks on an article before publish. Plagiarism, fact-check, grammar, legal risk, tone, and seven more. It runs locally, finishes in under 90 seconds, and costs somewhere between nothing and 25 cents per article depending on which providers you configure.

This post is the story of why I built it, what it checks, and why I made it free, open source, and bring-your-own-key instead of turning it into another subscription tool.

Three Failure Patterns AI Content Reviewers Miss

I spent most of 2025 ghostwriting across fintech, wellness, and SaaS. By mid-year, AI was the default first draft across every client. Three failure patterns kept showing up in articles that had already passed human review.

Pattern 1: Factual drift. One health article bumped a vitamin C dosage from 100mg in the brief to 200mg in the draft. Not a typo. Not a rounding error. The model just generated a plausible-sounding number that happened to be wrong. Three reviewers signed off. The client almost published a dosage recommendation they had never agreed to.

Pattern 2: Accidental plagiarism. Another piece had a paragraph on antioxidants that read almost word-for-word like an existing wellness blog. Not a direct copy — close enough to get flagged if anyone ran it through Copyscape, which nobody was doing by default. Close enough to create a legal-dispute risk if the other site's lawyer ever noticed.

Pattern 3: Confident fabrication. "The average B2B buyer engages with 13 pieces of content before purchasing." That specific statistic, in a thought-leadership piece I was editing. No source. No paper. The number did not exist. It sounded right, which is exactly what LLMs are optimized to produce.

Each article looked clean on first read. Each one passed human review. Each one could have shipped. That was the uncomfortable part.

Why Grammarly, ChatGPT, and Copyscape Aren't Enough

Before I built anything, I did the obvious thing: looked at what was already out there.

Grammarly is excellent at grammar and style. It is not designed to check whether a dosage is right or whether a statistic has a source. That is fine. Grammar is not the same problem.

ChatGPT as a fact-checker is worse than useless when it runs without retrieval. Ask an LLM to verify a claim and it answers confidently. Ask it to cite sources and it can invent URLs that do not exist. Fact-checking is a retrieval problem, not a vibes problem.

Copyscape has a huge plagiarism index. But running it by hand on every draft is a manual step most teams skip, and plagiarism is only one failure mode.

Surfer and Clearscope optimize for keyword coverage against search intent. Useful, but not designed to tell you whether your claims are defensible.

Human review catches structure and flow. In the examples above, three editors across my clients caught every typo and awkward sentence. They did not catch the fake statistic, because it sounded credible. Human attention gets tired, and it reads for argument, not provenance.

The gap was not any one tool. The gap was the missing pipeline before publish.

What CheckApp Does

CheckApp is that pipeline.

One CLI command. One file or Google Doc URL. One report that answers: is this publishable?

```shell
npm install -g checkapp
checkapp --setup
checkapp article.md
```

Under the hood, 12 skills run in parallel. Each one owns one dimension of the publishability question.

The report shows the overall score, the verdict, and the per-skill findings: the line flagged, the severity, and the source evidence when it exists.
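Here's a minimal sketch of that fan-out and the report it produces. The types, the averaged score, the 70-point pass threshold, and the rule that any error-level finding fails the article are hypothetical illustrations, not CheckApp's internals.

```typescript
// Hypothetical shapes for illustration; CheckApp's real types may differ.
type Severity = "info" | "warning" | "error";

interface Finding {
  line: number;
  severity: Severity;
  message: string;
  evidence?: string; // source URL or quote, when one exists
}

interface SkillResult {
  skill: string;
  score: number; // assumed 0-100 scale, higher is better
  findings: Finding[];
}

interface Skill {
  name: string;
  run(text: string): Promise<SkillResult>;
}

interface Report {
  overallScore: number;
  verdict: "pass" | "fail";
  results: SkillResult[];
}

// Average the skill scores; fail on any error-level finding or a low
// average. Both rules and the threshold of 70 are assumptions.
function aggregate(results: SkillResult[], passThreshold = 70): Report {
  const total = results.reduce((sum, r) => sum + r.score, 0);
  const overallScore = results.length === 0 ? 100 : Math.round(total / results.length);
  const hasError = results.some((r) => r.findings.some((f) => f.severity === "error"));
  const verdict = !hasError && overallScore >= passThreshold ? "pass" : "fail";
  return { overallScore, verdict, results };
}

// Start every skill at once and wait for all of them. A skill that throws
// (say, a missing API key) becomes a zero-score error instead of sinking the run.
async function runSkills(text: string, skills: Skill[]): Promise<Report> {
  const results = await Promise.all(
    skills.map((skill) =>
      skill.run(text).catch(
        (err): SkillResult => ({
          skill: skill.name,
          score: 0,
          findings: [{ line: 0, severity: "error", message: String(err) }],
        }),
      ),
    ),
  );
  return aggregate(results);
}
```

Catching per skill, instead of letting one rejection fail the whole `Promise.all`, is the design choice that matters: one provider being down should not hide the other results.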

The 12 Skills

Not all of these run by default. Some are offline or free-provider checks. Others require an API key from a provider you choose. CheckApp does not provide API keys. It uses yours.

| Skill | What it checks | Default engine | Cost per check |
|---|---|---|---|
| Plagiarism | Full indexed web for copied passages | Copyscape | ~$0.09 |
| AI Detection | Probability the text was AI-generated | Copyscape | ~$0.09 |
| SEO | Word count, headings, readability, keywords, links | Offline (no API) | Free |
| Grammar & Style | Grammar + per-finding rewrites | LanguageTool (free tier) | Free |
| Academic Citations | Merges citations onto fact-check findings | Semantic Scholar | Free |
| Self-Plagiarism | Overlap with your own past articles | Cloudflare Vectorize | ~$0.0001 |
| Fact Check | Retrieves evidence for claims; assesses confidence | Exa Search / Exa Deep Reasoning / Parallel Task | $0.008–$0.03 per claim |
| Tone of Voice | Compares against a brand voice guide; returns rewrites | Claude / MiniMax | ~$0.002 |
| Legal Risk | Scans for health, defamation, false-promise claims | Claude / MiniMax | ~$0.002 |
| Content Summary | Extracts topic, argument, audience, tone | Claude / MiniMax | ~$0.002 |
| Brief Matching | Coverage vs. an uploaded content brief | Claude / MiniMax | ~$0.002 |
| Content Purpose | Classifies article type; flags missing elements | Claude / MiniMax | ~$0.002 |
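The Brief Matching row maps most directly to the vitamin C drift, and it runs on an LLM. As a deliberately narrower, swapped-in technique, here is a deterministic sketch of just that failure: extract every number-with-unit from the brief and the draft, then flag draft quantities whose unit the brief uses but whose value it never mentions. The regex, unit list, and function names are illustrative assumptions.

```typescript
// Deterministic stand-in for one narrow slice of brief matching:
// quantities with units, such as "100mg", "2.5 g", or "400 IU".
interface Quantity {
  value: number;
  unit: string;
  raw: string;
}

function extractQuantities(text: string): Quantity[] {
  // Hypothetical unit list; the lookahead stops "2 grams" matching as "2 g".
  const pattern = /(\d+(?:\.\d+)?)\s?(mcg|mg|g|iu|ml|%)(?![a-z])/gi;
  const found: Quantity[] = [];
  let m: RegExpExecArray | null;
  while ((m = pattern.exec(text)) !== null) {
    found.push({ value: Number(m[1]), unit: m[2].toLowerCase(), raw: m[0] });
  }
  return found;
}

// Flag draft quantities that use a unit the brief also uses, but with a
// value the brief never mentions for that unit.
function findQuantityDrift(brief: string, draft: string): Quantity[] {
  const allowed = new Map<string, Set<number>>();
  for (const q of extractQuantities(brief)) {
    if (!allowed.has(q.unit)) allowed.set(q.unit, new Set<number>());
    allowed.get(q.unit)!.add(q.value);
  }
  return extractQuantities(draft).filter(
    (q) => allowed.has(q.unit) && !allowed.get(q.unit)!.has(q.value),
  );
}
```

A regex misses paraphrases such as "a tenth of a gram," which is one reason to lean on an LLM for the general case; a check this cheap can still run alongside it.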

Two of the skills are worth calling out individually.

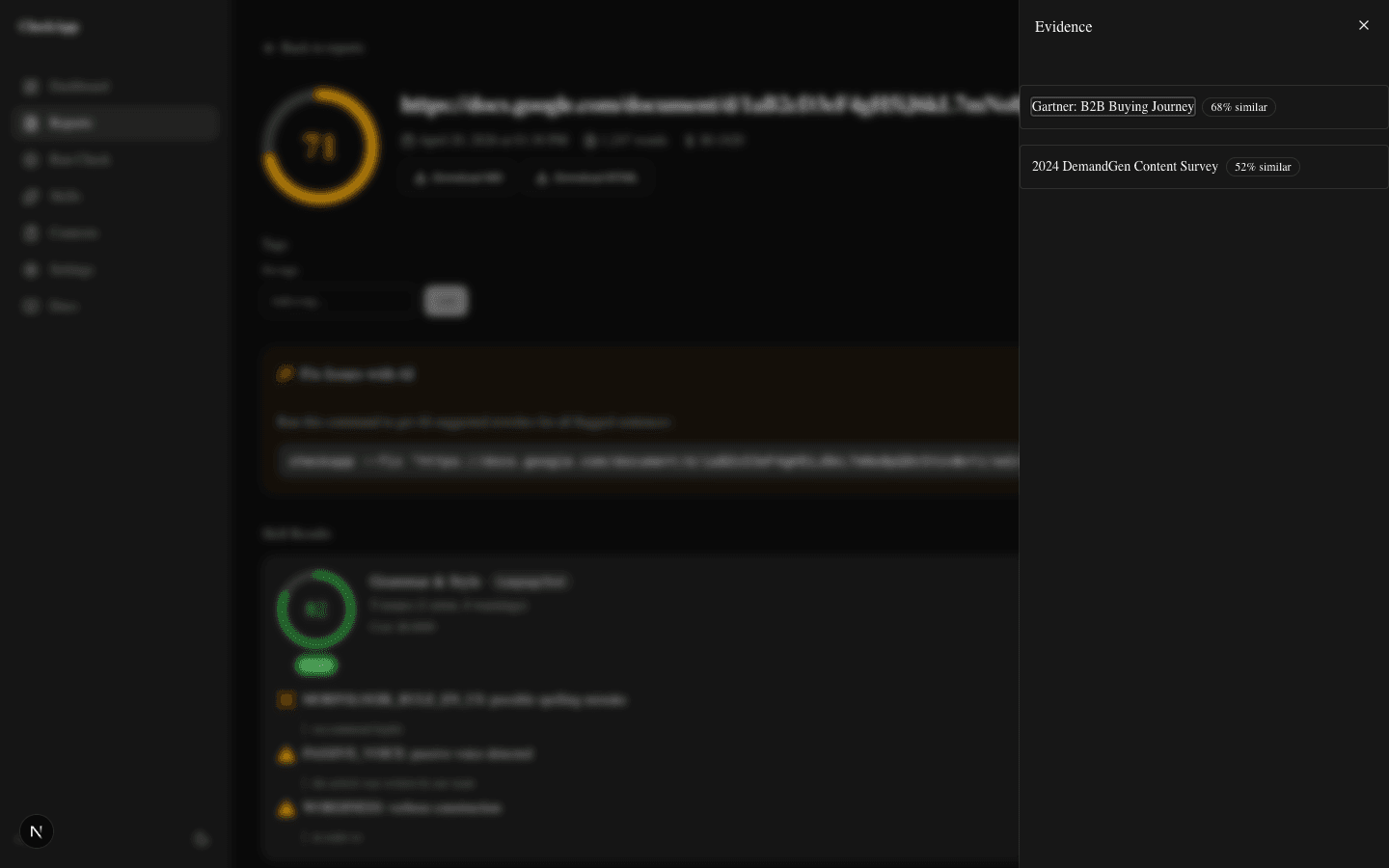

Fact check is where the most interesting work happens. The skill extracts factual claims, sends each one to a retrieval provider, and returns sources with relevance scores and a confidence assessment. If the claim is "the average B2B buyer engages with 13 pieces of content before purchasing," the skill tries to find a source. If it cannot, that is a finding worth seeing before publish.
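A minimal sketch of that claim, evidence, confidence loop, assuming claims have already been extracted (in practice an LLM step). `RetrievalProvider` stands in for Exa or Parallel and is not either provider's real API; the 0 to 1 relevance scale and the 0.75/0.4 confidence cut-offs are also assumptions.

```typescript
interface Source {
  url: string;
  relevance: number; // assumed 0-1 scale
}

// Hypothetical stand-in for a retrieval provider such as Exa; not its real API.
interface RetrievalProvider {
  search(claim: string): Promise<Source[]>;
}

type Confidence = "supported" | "weak" | "unsourced";

interface ClaimCheck {
  claim: string;
  sources: Source[];
  confidence: Confidence;
}

// Map the best relevance score to a coarse label (cut-offs are assumptions).
function assess(sources: Source[], strong = 0.75, weak = 0.4): Confidence {
  const best = sources.reduce((max, s) => Math.max(max, s.relevance), 0);
  if (best >= strong) return "supported";
  if (best >= weak) return "weak";
  return "unsourced";
}

// Query every claim concurrently and keep sources sorted, most relevant first.
async function checkClaims(claims: string[], provider: RetrievalProvider): Promise<ClaimCheck[]> {
  return Promise.all(
    claims.map(async (claim) => {
      const sources = (await provider.search(claim)).sort((a, b) => b.relevance - a.relevance);
      return { claim, sources, confidence: assess(sources) };
    }),
  );
}
```

In this sketch, the B2B statistic from earlier comes back with no sources and lands in the report as `unsourced`, which is exactly the finding you want to see before publish.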

Self-plagiarism is for people publishing under the same byline or agency on a regular cadence. You run `checkapp index <dir>` once to ingest your own past articles into a local vector index. After that, every new piece gets compared against your archive. It catches the subtle "I already said this three months ago, almost word-for-word" pattern that normal plagiarism tools miss.
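Conceptually, the comparison is a nearest-neighbor search over vectors. The table names Cloudflare Vectorize as the default engine, which would hold real embeddings; the self-contained sketch below swaps those for plain term-frequency vectors and a linear scan with cosine similarity. The tokenizer, the 0.8 threshold, and the function names are my assumptions, not CheckApp's.

```typescript
// Term-frequency vector: a crude, dependency-free stand-in for an embedding.
function termVector(text: string): Map<string, number> {
  const vec = new Map<string, number>();
  for (const word of text.toLowerCase().match(/[a-z0-9']+/g) ?? []) {
    vec.set(word, (vec.get(word) ?? 0) + 1);
  }
  return vec;
}

function norm(v: Map<string, number>): number {
  let sumSquares = 0;
  v.forEach((count) => {
    sumSquares += count * count;
  });
  return Math.sqrt(sumSquares);
}

function cosine(a: Map<string, number>, b: Map<string, number>): number {
  let dot = 0;
  a.forEach((count, word) => {
    dot += count * (b.get(word) ?? 0);
  });
  const denom = norm(a) * norm(b);
  return denom === 0 ? 0 : dot / denom;
}

interface ArchiveEntry {
  id: string;
  vector: Map<string, number>;
}

// One-time indexing step, analogous in spirit to `checkapp index <dir>`.
function buildIndex(articles: Record<string, string>): ArchiveEntry[] {
  return Object.keys(articles).map((id) => ({ id, vector: termVector(articles[id]) }));
}

// Archive articles at or above the similarity threshold, most similar first.
function findOverlaps(draft: string, index: ArchiveEntry[], threshold = 0.8): { id: string; score: number }[] {
  const v = termVector(draft);
  return index
    .map((entry) => ({ id: entry.id, score: cosine(v, entry.vector) }))
    .filter((match) => match.score >= threshold)
    .sort((x, y) => y.score - x.score);
}
```

Term frequencies only catch near-verbatim reuse; real embeddings are what let a vector index catch the same idea reworded.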

Want to try it on your next draft?

```shell
npm install -g checkapp
checkapp article.md
```

Or start at checkapp.xyz if the terminal isn't your thing.

How It Runs

There are three surfaces, but one pipeline.

Terminal. `checkapp article.md` is the default: pass a file path, get a report. If you want to use it in CI, it exits with a non-zero code on failure.
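In CI, the exit code is the whole contract: a non-zero status fails the job, so for a single draft you just run the command. To gate several drafts in one step, a small Node wrapper can call the checker once per file. This is a hypothetical helper, not something CheckApp ships, and it relies only on the non-zero-on-failure behavior described above; `failingFiles` and `main` are illustrative names.

```typescript
import { spawnSync } from "child_process";

// Run `command ...args <file>` once per draft and return the drafts whose run
// failed. spawnSync reports status null if the command could not start or was
// killed by a signal, so "not exactly 0" treats those cases as failures too.
function failingFiles(files: string[], command = "checkapp", args: string[] = []): string[] {
  return files.filter((file) => {
    const run = spawnSync(command, [...args, file], { stdio: "inherit" });
    return run.status !== 0;
  });
}

// CI entry point: pass draft paths as arguments, e.g. main(process.argv.slice(2)).
function main(drafts: string[]): void {
  const failed = failingFiles(drafts);
  if (failed.length > 0) {
    console.error(`CheckApp flagged: ${failed.join(", ")}`);
    process.exitCode = 1; // a non-zero exit fails the CI job
  }
}
```

Running every draft before failing, rather than stopping at the first bad one, means one CI run shows the full list of drafts that need work.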

Dashboard. `checkapp --ui` starts a local web server at localhost:3000. The dashboard shows report history, provider configuration, cost tracking, and exports. No account, no login. It writes to SQLite locally.

MCP server. For Claude Code or Cursor users, CheckApp exposes an MCP server. `check_article` becomes a native tool in the agent workflow, so the same agent that drafts can also check before handoff.
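At the protocol level nothing CheckApp-specific is going on: an MCP client calls a tool by sending a JSON-RPC 2.0 `tools/call` request with the tool's `name` and `arguments`, and over the stdio transport each message is a single line of JSON. A sketch of what an agent might send to invoke `check_article`; the `path` argument name is a hypothetical placeholder, since the real input schema is whatever the server advertises through `tools/list`.

```typescript
// Shape of an MCP `tools/call` request (JSON-RPC 2.0).
interface ToolCallRequest {
  jsonrpc: "2.0";
  id: number;
  method: "tools/call";
  params: {
    name: string;
    arguments: Record<string, unknown>;
  };
}

function checkArticleRequest(id: number, path: string): ToolCallRequest {
  return {
    jsonrpc: "2.0",
    id,
    method: "tools/call",
    // "path" is a hypothetical argument name; read the real schema from tools/list.
    params: { name: "check_article", arguments: { path } },
  };
}

// On the stdio transport, each message is one newline-terminated JSON line.
// JSON.stringify without indentation never emits a raw newline.
function encode(message: ToolCallRequest): string {
  return JSON.stringify(message) + "\n";
}
```

You never write this by hand; Claude Code or Cursor builds the request when the model decides to call the tool.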

All three surfaces use the same pipeline. The CLI is the ground truth.

The Cost Reality

"Free, open source, BYOK" sounds like positioning copy, but in this case the details matter.

Free means CheckApp itself costs nothing. The CLI, dashboard, and MCP server are MIT-licensed. No subscription, no per-document fee, no login.

Open source means the code is at github.com/sharonds/checkapp. You can read it, fork it, modify it, run it offline, and audit what it does with your content. Nothing leaves your machine except the API calls you choose to enable.

BYOK, short for Bring Your Own Keys, means that when you configure a paid skill (fact-check via Exa, plagiarism via Copyscape), you use your own API keys. You pay the providers directly. CheckApp takes zero margin.

Three cost tiers exist in practice:

- $0.00 — LanguageTool free tier for grammar, Semantic Scholar for citations, offline SEO. This is a real, useful check that doesn't require a single paid key.

- ~$0.05 per article — Add Exa Search for fact-check and Claude Haiku for LLM skills. This is the configuration most writers land on.

- ~$0.25 per article — Add Exa Deep Reasoning, Sapling for premium grammar, Copyscape for plagiarism. This is the "nothing gets through" configuration for regulated-industry content.
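The middle and top tiers line up with the per-check prices in the skills table. Here is the arithmetic as a small sketch under stated assumptions: five fact-checkable claims per article, Exa Search at the low end and Exa Deep Reasoning at the high end of the table's $0.008 to $0.03 range, all five LLM skills on Claude Haiku at ~$0.002 each, and Sapling left out because the table gives no price for it.

```typescript
// Per-check prices from the skills table (USD, approximate).
// Assumption: Exa Search is the low end and Exa Deep Reasoning the high end
// of the table's $0.008 to $0.03 per-claim range.
const PRICE = {
  exaSearchPerClaim: 0.008,
  exaDeepPerClaim: 0.03,
  copyscape: 0.09,
  llmSkill: 0.002, // tone, legal, summary, brief matching, purpose
};

const LLM_SKILLS = 5;

interface TierConfig {
  factCheckPerClaim: number; // 0 when fact-check is off
  llmSkills: boolean;
  plagiarism: boolean;
}

function articleCost(cfg: TierConfig, claims: number): number {
  const cost =
    cfg.factCheckPerClaim * claims +
    (cfg.llmSkills ? LLM_SKILLS * PRICE.llmSkill : 0) +
    (cfg.plagiarism ? PRICE.copyscape : 0);
  return Math.round(cost * 1000) / 1000; // round to a tenth of a cent
}

const claimsPerArticle = 5; // assumption
const tiers: Record<string, TierConfig> = {
  free: { factCheckPerClaim: 0, llmSkills: false, plagiarism: false },
  standard: { factCheckPerClaim: PRICE.exaSearchPerClaim, llmSkills: true, plagiarism: false },
  strict: { factCheckPerClaim: PRICE.exaDeepPerClaim, llmSkills: true, plagiarism: true },
};

for (const name of Object.keys(tiers)) {
  console.log(`${name}: $${articleCost(tiers[name], claimsPerArticle).toFixed(3)}`);
}
// free: $0.000
// standard: $0.050
// strict: $0.250
```

Fact-check is the only per-claim line item, so long, statistic-heavy pieces will land above these figures.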

This is not a competitive shot at Grammarly. Grammarly is excellent at grammar. The point is that BYOK removes the platform markup that SaaS pricing depends on. You pay the providers, not the wrapper.

Who It's For

Three kinds of people have the same underlying pain: they publish work they will be judged on, and AI makes it easier to ship something plausible but wrong.

Professional and agency writers need a systematic step between "writer done" and "editor starts." The vitamin C example was not an edit-round problem. It was a trust problem.

Marketing and content teams are shipping 5–20 pieces a month under tight timelines. A made-up statistic in a founder byline is not just a bad sentence. It becomes a credibility problem for the person whose name is on it.

Anyone publishing in regulated spaces has less room for "we will fix it later." Health, finance, legal, insurance, and supplements content can turn one wrong claim into a regulator letter or legal-dispute email.

If you're a Claude Code or Cursor user, there's a fourth flavor: the MCP server makes `check_article` a native tool. Your agent drafts, your agent checks, your agent reports, all inside the same session.

Why Open Source Matters Here

Most AI content tools are SaaS. Sign up, pick a tier, connect your account, pay monthly. That model exists because hosting, support, and onboarding cost money, and the markup pays for those.

CheckApp does not have those costs because it runs on your machine. No hosted processing layer. No onboarding funnel. Just a CLI you install once and API keys you already have or can get in 10 minutes.

That also means if I disappear tomorrow, CheckApp still works. The code is on GitHub. The providers are standard APIs. There's no platform to sunset. If you want to fork it and build your own version, the MIT license explicitly says you can.

There is another consequence worth naming: I build CheckApp based on what people use it for, not what they pay for it. There are no feature tiers to optimize and no upsell ladder to protect. If you open an issue describing a skill you want, it gets weighed on merit.

I shipped it publicly because the interesting content quality work happens in the open. The full backstory and deeper technical posts live at checkapp.xyz/blog, including the fact-check pipeline, BYOK economics, Studio roadmap, and a case study on five client articles.

Try It on the Next Draft

If you publish content that you'll be judged on, here's the smallest useful thing to do today:

```shell
npm install -g checkapp
checkapp --setup
checkapp article.md
```

Start with the free providers: LanguageTool for grammar, Semantic Scholar for citations, and offline SEO. That's a useful check for zero cents. Add Exa Search when you're ready to spend a few cents on fact-checking.

If the terminal isn't your thing, the dashboard runs at localhost:3000 after `checkapp --ui`.

The repo and deeper product notes live here:

- Product: checkapp.xyz

- Repo: github.com/sharonds/checkapp

- Blog with 9 deep-dives: checkapp.xyz/blog

Open an issue if you hit a bug or want a skill added. CheckApp v1.2.0 is the checker: finish writing, run it, fix what it finds. Phase two is Studio, an inline editor where findings appear while you write. For now, the useful thing is simpler: run the checker before the next draft leaves your hands.

Related reading on this blog: What Is OpenClaw — the personal infrastructure layer I run alongside CheckApp; How I Built an AI Agent That Finds Warm Leads While I Sleep — a different slice of the "AI as infrastructure" thesis.